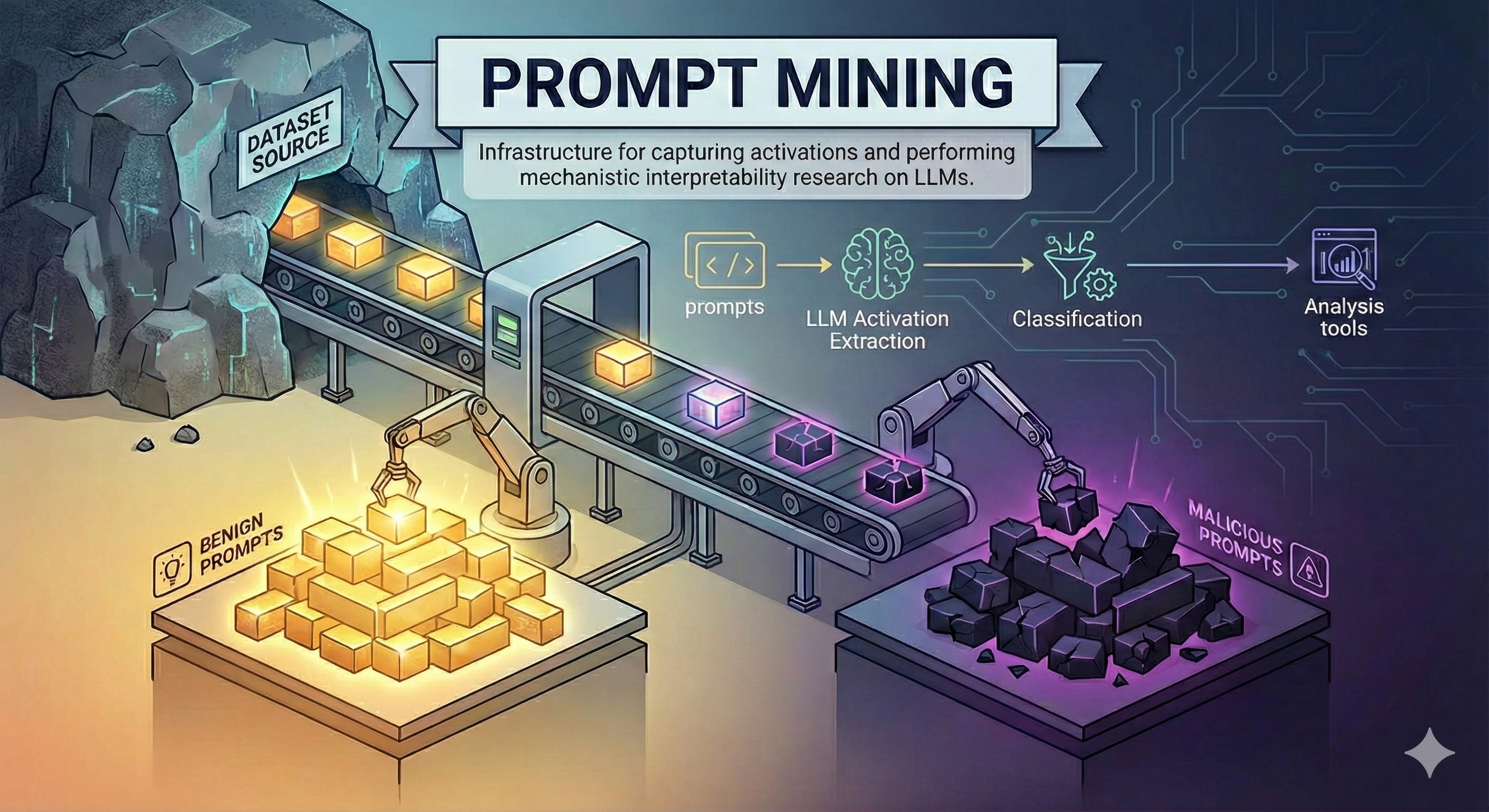

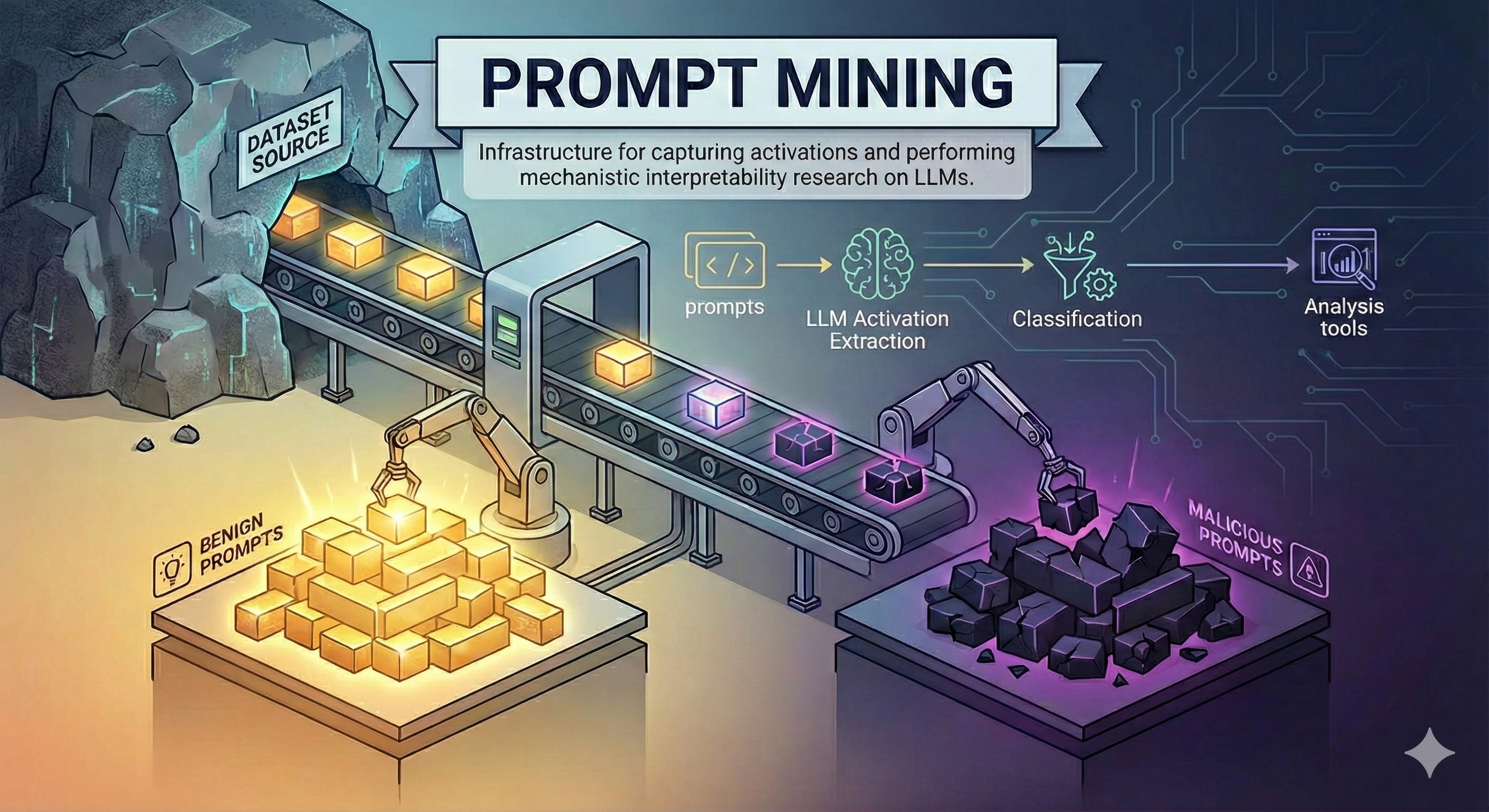

Image via Zenity Labs

Thursday, February 19, 2026

Agents are moving faster than regulators can handle

Google just dropped Lyria 3 in Gemini and made music generation as frictionless as asking for toast (wild), while Anthropic is sounding the alarm that real-world AI agents are gaining autonomy way faster than regulation can keep up (yikes). Meanwhile, OpenAI is benchmarking AI agents on smart contract bug exploitation, and Zenity Labs released a maliciousness classifier that detects attacks by looking at the LLM's internals instead of just watching what comes out. Here's the question: if AI agents can now make music, find bugs, and gain autonomy faster than we can regulate them, are we prepared for what's next?

Top Stories

Anthropic

Anthropic's analysis of millions of real-world agent interactions shows AI systems operating with increasing autonomy while experienced users develop trust-based oversight strategies. Most deployments remain low-risk, but emerging high-stakes applications in sensitive domains call for new post-deployment monitoring approaches.

Google democratizes music creation through Gemini's Lyria 3 model, enabling anyone to generate original songs via text or image prompts. It navigates copyright tensions with watermarking and style-based safeguards meant to avoid artist mimicry.

Google Blog

Google launches Lyria 3 for consumer music generation in Gemini, emphasizing responsible AI development with watermarking and copyright safeguards while expanding creative tools beyond images and video.

AlphaSignal

OpenAI released EVMbench to measure how well AI agents can find and fix smart contract vulnerabilities, showing significant capability gains but highlighting the importance of using AI defensively as blockchain security risks emerge.

Zenity Labs

Zenity Labs introduces an activation-based maliciousness classifier for AI agents with rigorous out-of-distribution testing and open-source interpretability tools, demonstrating that monitoring LLM internals outperforms traditional input/output filtering approaches.

Industry Voices

Tony Feng

Google DeepMind

Shares technical deep-dives on reinforcement learning and AI safety research from inside DeepMind's frontier labs.

Ben Spector

Co-founder at Flapping Airplanes

Posts unfiltered takes on AI startup building and what actually works when shipping AI products to users.

Sarah Friar

CFO at OpenAI

Offers rare insights into OpenAI's business strategy and how the economics of frontier AI actually work.

Thibault Sottiaux

Head of Codex at OpenAI

Breaks down what's happening with AI coding tools and where Codex/GPT models are heading for developers.
