Wednesday, November 26, 2025

The Scaling Era is Over – Here Comes Research

Ilya Sutskever just declared we're officially leaving the scaling era behind (wild), signaling that the real innovation battle now happens in research labs, not just in bigger GPU clusters. Meanwhile, OpenAI's having a rough week: a court blocked them from using "cameo" in the Sora app after a trademark dispute (yikes), their new GPT-5.1-Codex-Max posts benchmark gains but looks incremental next to rivals and drew mixed external cybersecurity evaluations, and the economics are looking brutal: frontier LLMs keep bleeding cash until training-cost growth slows. On the brighter side, Amazon's going all-in with a stunning $50B bet on US government AI infrastructure. If scaling's dead, can startups even compete without billions in research funding?

Top Stories

Dwarkesh Patel

Ilya Sutskever contends that the era of scaling is ending and that AI research must focus on generalization and continual learning rather than on ever-larger parameter counts and datasets. He lays out SSI's strategy of building incrementally deployed, human-like learning agents aligned to sentient life rather than pursuing single-shot superintelligence. That shift requires fundamentally rethinking how models learn and acquire human values.

A federal court blocked OpenAI from using "cameo" in its Sora app following a trademark dispute with the celebrity video platform Cameo, highlighting emerging intellectual property conflicts in generative AI commercialization.

TLDR Newsletter

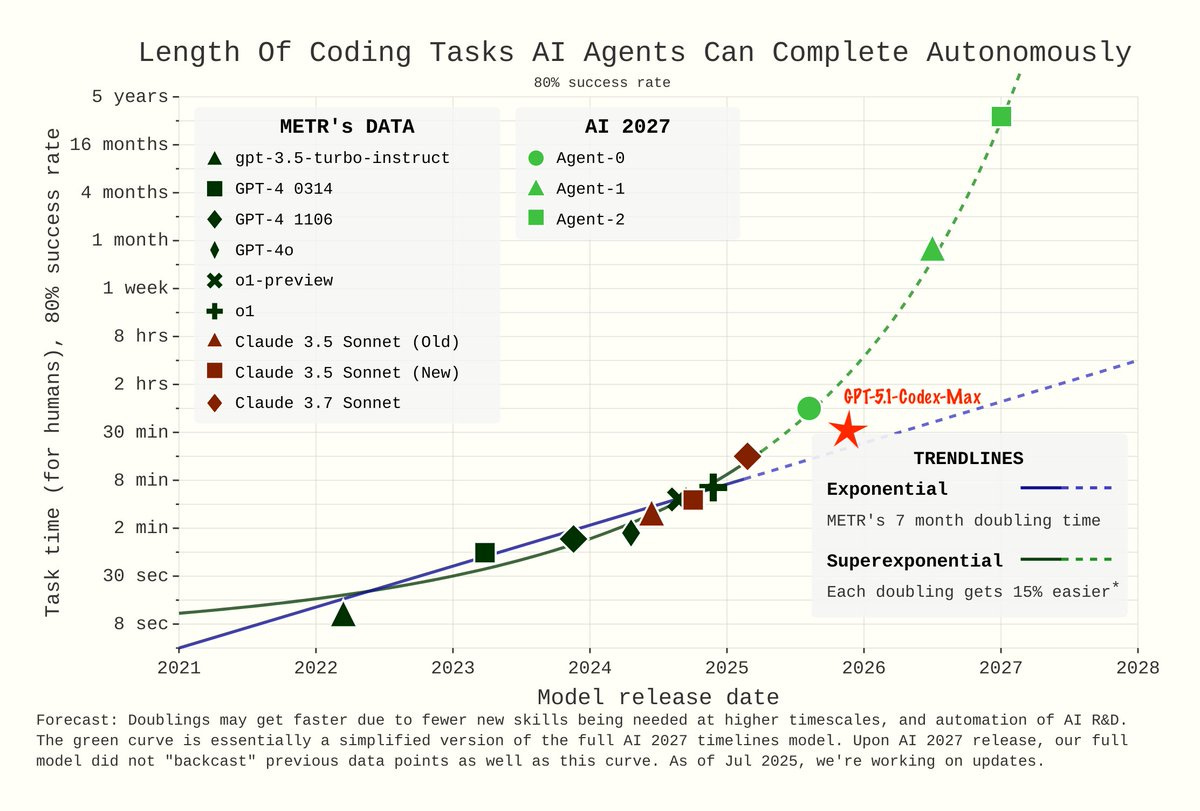

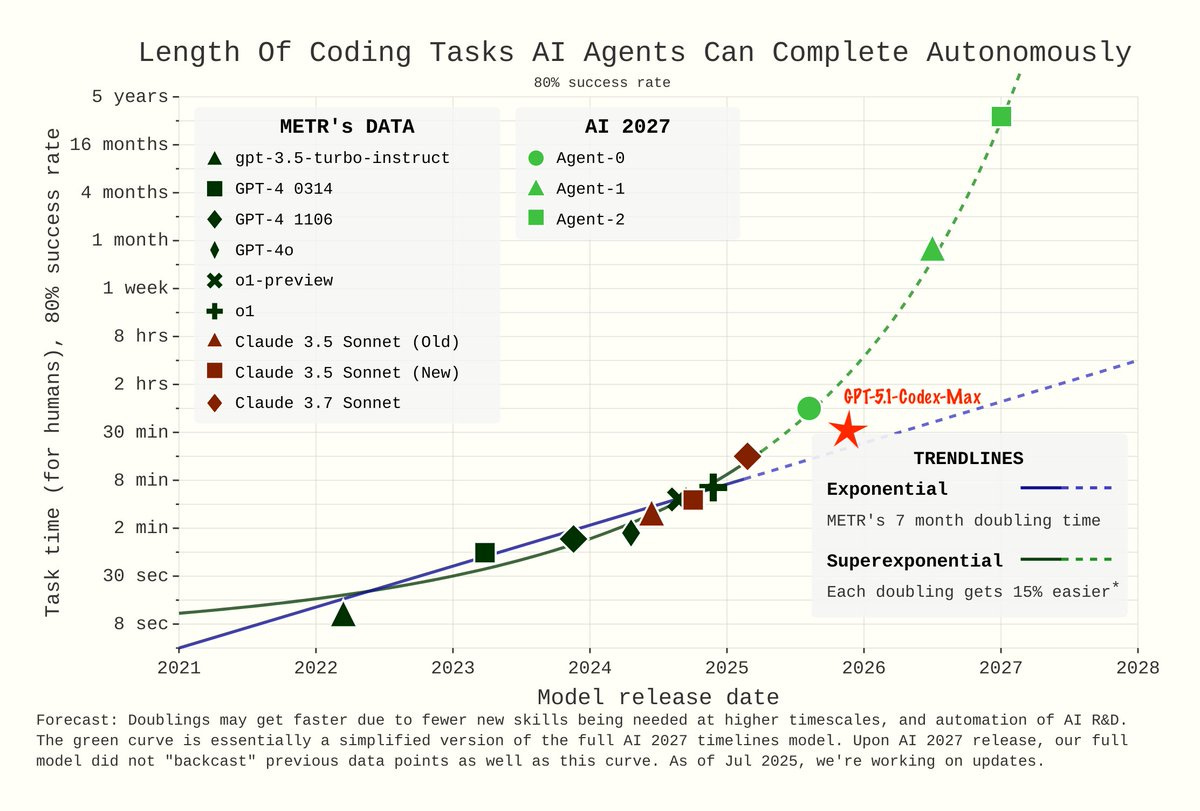

OpenAI's GPT-5.1-Codex-Max advances coding AI with strong benchmark gains and progress toward automated software engineering, though external cybersecurity evaluations show mixed results and the upgrade appears incremental compared to competing models.

Robonomics

Frontier LLMs are cash-burn machines until training-cost growth slows, at which point profitability emerges instantly. Both OpenAI and Anthropic are betting that this happens by 2028, with different strategies for getting there.
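
To see why profitability can flip that abruptly, here is a toy sketch in Python. Everything in it is an assumption for illustration, not a figure from either lab: each model is assumed to earn a fixed multiple of its own training cost in the following year, while the lab pays for a successor whose training cost grows by a per-year factor. The function name, the 2x return multiple, and the growth schedule are all hypothetical.

```python
def yearly_cash_flow(first_training_cost, return_multiple, growth_by_year):
    """Toy model (hypothetical numbers): each year the lab earns
    return_multiple x the cost of its current model and pays to train
    a successor that costs growth x as much."""
    flows = []
    cost = first_training_cost
    for growth in growth_by_year:
        revenue = return_multiple * cost   # earnings from the current model
        next_cost = growth * cost          # bill for training the successor
        flows.append(revenue - next_cost)  # net cash flow for the year
        cost = next_cost
    return flows


# Assumed schedule: training cost triples for three years, then growth slows.
flows = yearly_cash_flow(1.0, 2.0, [3.0, 3.0, 3.0, 1.5, 1.0])
print(flows)  # [-1.0, -3.0, -9.0, 13.5, 40.5]
```

Under these assumptions, losses deepen every year that cost growth (3x) outpaces the return multiple (2x), and cash flow turns positive in the first year growth drops below it, even though each individual model paid for itself all along.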

CNBC

Amazon is investing up to $50 billion in government-focused AI infrastructure to serve 11,000+ government agencies, signaling major competition for public sector AI adoption and reinforcing the critical role of data center capacity in the AI era.
